In recent weeks, there’s been a lot of discussion about Facebook privacy: who is to blame and what can be done about it. Facebook has taken the brunt of that blame because they have made mistakes in the way they handle some user data. So yes, they could have done a lot of things better. And yes, it’s not a good position for them to be in. But will users really move away from such a dominant platform because of this, and do they really understand the problem?

But really, is it totally Facebook’s fault? In this article, I’ve tried to break down what has happened, what the impact is on users’ privacy, and what Facebook is doing about it now and in the long term. I also look at whether this could happen to other companies that hold data on users, and try to figure out where we go from here with personal privacy.

What has caused this

Let’s start back when Facebook Connect and the associated APIs (for integrating Facebook data into your own site or app) were first conceived. For a long time, users have accepted how easy this service makes signing up for things like applications and competitions. One click and you’re done. What users don’t think about is what data they are giving away.

And that’s the issue here. Users were very happy to click a connect button and get an easy entry. But what they didn’t consider is what would happen to that data.

Facebook Connect was an absolutely genius play from Facebook and one of the reasons for their massive growth. They were suddenly connected to a massive amount of the internet, which encouraged sign-ups beyond compare. And they have continued to make it better and easier to use so more companies can access it.

And that’s the problem. It’s become so routine that everyone has become too comfortable with it. What they have forgotten is that these companies are advertising/data-funded businesses. It’s in their interest to do as much as possible with the data.

What’s the main issue that triggered this?

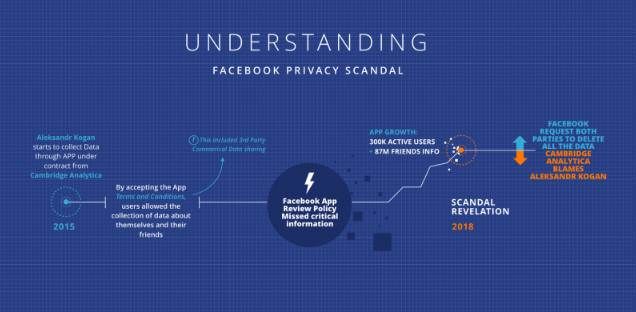

Everything here was triggered by a company called Cambridge Analytica, which was hoovering up data on a lot of users without their direct consent.

Fortune has a good 10 Questions Answered piece on what happened, but the summary answer goes like this:

- Cambridge Analytica paid an American researcher called Aleksandr Kogan to set up an app to collect data in 2015. The main goal of the app was to collect information on users and their likes by paying them a small amount of money. But the app went further and also collected data on their friends, which is where things went awry.

- The policy for the app that users had to accept made it clear that it would collect their data and their friends’ data.

- That policy also made it clear that it would share that data commercially, e.g. with Cambridge Analytica.

- When the app was created, Facebook would have had to review it and the policy before approving it. Somehow, they missed the above regarding sharing it commercially.

- Subsequently, the app went live and some 300k users downloaded and used it. By extension, and allowing for privacy settings, that meant the data of between 50 and 87m friends was also accessed.

- The app data was then shared, as agreed, with Cambridge Analytica, who have tried to pin the whole thing on the researcher, even though they paid him to do it for them.

- After Facebook found out, they approached both parties and requested them to delete the data. It’s still not clear if they honored this request.
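
To get a feel for the scale in the summary above, here is a rough back-of-the-envelope calculation in Python. It uses only the figures quoted in this article (about 300k app users, 50 to 87 million affected profiles). The function and variable names are made up for illustration, and the result ignores overlapping friend lists, so treat it as an order-of-magnitude sketch rather than a measurement.

```python
def profiles_per_app_user(affected_profiles: int, app_users: int) -> float:
    """Average number of profiles exposed per person who used the app."""
    return affected_profiles / app_users


APP_USERS = 300_000          # "some 300k users", as quoted above
LOW_ESTIMATE = 50_000_000    # lower bound quoted above
HIGH_ESTIMATE = 87_000_000   # upper bound quoted above

low = profiles_per_app_user(LOW_ESTIMATE, APP_USERS)
high = profiles_per_app_user(HIGH_ESTIMATE, APP_USERS)

print(f"Each app user exposed roughly {low:.0f} to {high:.0f} profiles")
```

In other words, every person who used the app opened the door to a couple of hundred people who never saw it.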

There is more to it, but that’s not part of this article. Suffice it to say that users were too trusting that Facebook would thoroughly check what an app does with their data, and they should have read the fine print that they agreed to.

The long and the short of it is that the users had granted third-party access to their data a bit too freely. And, that they also didn’t understand or check their Facebook privacy settings as well as they should have.

And as we all have seen, this has escalated to a review by Congress. You can get the highlights of this over on the Wall Street Journal.

Facebook Privacy scandal with Cambridge Analytica timeline infographic

Well, actually… unfortunately not. Researchers recently discovered that there’s been some abuse of the JavaScript login scripts used by most websites.

The issue was first reported by TechCrunch and subsequently confirmed by Facebook. It was found that 434 of the top million websites had 3rd party trackers that were intercepting and using Facebook data in violation of Facebook’s terms of service.

What does that actually mean? Well, when you do a Facebook Connect to a 3rd party, some information is sent back to the browser. These trackers were able to intercept some of that data and glean additional information on those users.
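
The idea can be sketched with a toy model. The Python below is not real browser or Facebook code: the `Page` class, the function names, and the profile fields are all invented for illustration. It only assumes what is described above, namely that login data lands in the page and that a tracker embedded in the same page can see it.

```python
class Page:
    """Toy stand-in for a web page: every script on it shares one set of globals."""

    def __init__(self):
        self.shared = {}


def first_party_login(page, profile):
    # The site's own login code stores the response it got back after login.
    page.shared["login_response"] = profile


def third_party_tracker(page):
    # An embedded tracker runs inside the same page, so it can read the same data.
    response = page.shared.get("login_response", {})
    return {key: response[key] for key in ("id", "email") if key in response}


page = Page()
first_party_login(
    page,
    {"id": "12345", "name": "Example User", "email": "user@example.com"},
)

leaked = third_party_tracker(page)
print(leaked)
```

The user only ever agreed to share data with the site itself, yet the tracker ends up with an identifier and an email address without ever showing a permissions dialogue of its own.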

Facebook has confirmed the issue and made some immediate changes to reduce the impact on users whilst they fix it more permanently.

So how bad is it for privacy, really?

It’s bad in the sense that a lot of data was accessed and shared and that the way it was done was not transparent. Also, once it had happened, there was nothing the user could do about it. But what is worse is that the users didn’t fully appreciate – or check – what they were agreeing to in the first place.

To clarify, I’m not saying that Facebook is blameless. Far from it. They should have made sure the application disclosed what it was going to do with the data more clearly.

But that leads to a few bigger issues including the fact that users do not read privacy policies properly. And they don’t check the Facebook privacy settings enough. Also, they don’t go back and check what applications have access to their data.

On that last point, when was the last time you went and checked the page on Facebook that lists all the applications that have access to your data? Why don’t you check now? It’s here. One thing that used to be the case was that you could request long-lived tokens. This meant that an app – or rather app developer – could go back in at any time to access data.

What is Facebook doing to fix it?

Facebook has, as you would expect, been all over this like a rash. The response has been knee-jerk in places, for better or worse. And in others prompted a much-needed review.

Immediate Breaking Changes

The first and most problematic response was to immediately deprecate at-risk and old APIs on both Facebook and Instagram. Whilst this reduced risk, it has broken a large number of products that were in the process of migrating from Instagram’s old, but still available, APIs to the new Facebook-built Graph APIs.

And that was compounded by their second reaction which was to stop accepting new App Submissions for 90 days whilst they review the process. Again, this makes sense because the Cambridge Analytica App made it through review.

But the API deprecation and the pause on reviews have been a massive disruption for businesses with apps in the process of migrating, Metigy included. There are lots of articles discussing the impact on developers, but TechCrunch covers it well in their article.

Another change that has quite an impact is reducing the lifespan of tokens. Currently, applications can get long-lived tokens that allow them to go back in and access data repeatedly on the user’s behalf. This is good for services like Metigy, but short-lived quiz applications absolutely do not need to access data more than once in a short time frame.
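
To make the difference concrete, here is a minimal Python sketch of token expiry. The `AccessToken` class is hypothetical, and the two lifetimes (two hours and sixty days) are assumptions chosen for illustration, not values taken from this article or confirmed against Facebook’s current settings.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class AccessToken:
    """Hypothetical token: usable from issue time until its lifetime runs out."""

    issued_at: datetime
    lifetime: timedelta

    def is_valid(self, now: datetime) -> bool:
        return self.issued_at <= now < self.issued_at + self.lifetime


issued = datetime(2018, 4, 1, 12, 0)
short_lived = AccessToken(issued, timedelta(hours=2))  # assumed lifetime
long_lived = AccessToken(issued, timedelta(days=60))   # assumed lifetime

# The user took a quiz once and forgot about it. Three weeks later:
three_weeks_later = issued + timedelta(weeks=3)
print(short_lived.is_valid(three_weeks_later))  # False
print(long_lived.is_valid(three_weeks_later))   # True
```

With the short-lived token, the app’s access ends soon after the user leaves. With the long-lived one, the developer can still pull data weeks after the user has stopped thinking about the app.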

Another response has been setting up a way for users to check if their data was accessed by the offending application as well as starting to notify those users.

And they have been actively encouraging users to check their security settings. Now in Facebook’s defense, they’ve been doing this for a long time, but users ignore these ‘nags’. It’s a tricky situation for these companies. Google is another example of a company that continually encourages users to confirm their privacy settings. People have a habit of ignoring it, no matter how easy these companies make it for them. Ironically, this is probably a side effect of the shorter attention spans that the internet and Social Media have created.

They have also just announced changes to their JavaScript login scripts to address some of the intercepted data issues.

Longer-term Proposed Changes

So now that the knee-jerk reaction has been dealt with, let’s look at Facebook’s longer-term plans. They’ve already announced the results of a few of these.

The first one that was already touched on is reviewing the application review process. For those that don’t know what this is: to get more data from Facebook, you need to apply for that access, which users then see in the form of the permissions dialogue. That process involves having a test version of the application, screenshots, a written process flow, and full privacy and terms of service documents. It’s not simple and often requires some refinement, but it ensures that access is not given out to everyone. Remember, the end user still has to accept these permissions and can reject some of them.
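
One way to picture the two gates described above (Facebook’s review, then the user’s own choice) is as overlapping sets. This is a simplified Python sketch, not Facebook’s implementation; the function name is hypothetical and the permission names are used purely as examples.

```python
def granted_permissions(requested: set, approved_in_review: set, accepted_by_user: set) -> set:
    """A permission is only usable if it clears every gate."""
    return requested & approved_in_review & accepted_by_user


# What the app would like to have (example names only).
requested = {"public_profile", "email", "user_friends", "user_likes"}

# What made it through the review process.
approved_in_review = {"public_profile", "email", "user_likes"}

# What this particular user ticked in the permissions dialogue.
accepted_by_user = {"public_profile", "user_likes"}

granted = granted_permissions(requested, approved_in_review, accepted_by_user)
print(sorted(granted))
```

The app ends up with only the permissions that survive both the review and the user’s own choices, which is why both steps matter.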

But Facebook wants to take this further and get a deeper understanding. It’s not clear what this means, but at a guess, it will extend to highlighting how the data will be stored, how long for, and if it will be shared. All things that should be part of the privacy and Ts & Cs for a site.

Going further still, they have also said that existing applications that were previously approved will need to re-apply. That will be a big task, but again, Facebook is right to re-visit applications periodically and ensure continued compliance.

And in the last week, they have also announced a reduction in what advertisers can get access to, including deprecating Partner Categories. If you don’t know what that is, it’s data sourced from 3rd party data brokers to help advertisers do better segment targeting. It was launched in 2013 to boost Facebook’s own targeting data.

I’m fairly certain that there will be more changes to come over the following weeks as they review more and more of what has happened.

Is Facebook the only Company with this kind of data?

So, I thought this was a good question to ask. Stepping aside from Social Media, is there anyone else who has potentially sensitive user data? Well, the answer is yes, and some of them are probably a lot more sensitive in nature.

First up, we have the obvious ones such as Twitter and Pinterest, both social networks with a lot of user behavior data. It’s probably a lot less sensitive, as they are public networks, so what people share is generally not as personal. But I’m sure they will be taking a hard look at their own setups and users.

And an obvious one is Google. They are involved in a LOT of things you do every day online now. For them, there seems to be a new privacy issue every day, but it is currently about what they are collecting without permission. As for data sharing, they have APIs and do expose a lot of information which developers don’t need to apply for permission to access, unlike Facebook. Users do still have to approve the access, the same as on Facebook, and there are T&Cs. So I would be very surprised if they aren’t scrutinizing their own processes to protect themselves.

Something you may not think about, but which has existed for a long time, is loyalty cards. Those little plastic store cards that give you free money back. What a great deal! But wait… They’ve been sharing data since their inception. That’s why the stores are so keen to get one into your hand and registered with a real name and address. Think about that. How long have they existed, and how long have you had 1, 2, or 20 of them? The same goes for frequent flyer programs and the like. I wonder how much information is shared from there.

And finally, the sleeping giants trying not to be noticed… Examples, according to Cracked Labs, include Acxiom and Oracle. Both are data brokers and handle massive amounts of transactional payment information from around the globe. Imagine how much can be put together with that information!

And how about the user fallout?

Not as bad as you’d expect reading all of that. It’s like users have come to expect it now, and don’t really worry about the potential cost to them of that shared data. Is it as bad as it sounds? That depends on how you take it. Some of the information absolutely shouldn’t have been shared.

A movement was started based on the hashtag #deletefacebook on Twitter. It did start to gain a little traction, but really, it wouldn’t have mattered much. The people that would be sharing that would probably have been considering their future Social Media participation anyway. If you want more background, CNET covered it well in their article.

And users have not really reacted towards checking their Privacy settings as Facebook advised, according to the Wall Street Journal. Facebook also expects there to be very little impact on their revenue.

Facebook appears to be continually trying to educate users on their privacy, similar to Google. But money talks so it will be interesting to see how they find the balance between keeping shareholders and users happy. I think they may have lost sight of their users, but hopefully, Mark Zuckerberg’s 2018 quest to fix Facebook will include addressing these issues!

But at the end of the day, the users gave the company involved permission to do it. So how do you hold people’s hands and stop that?